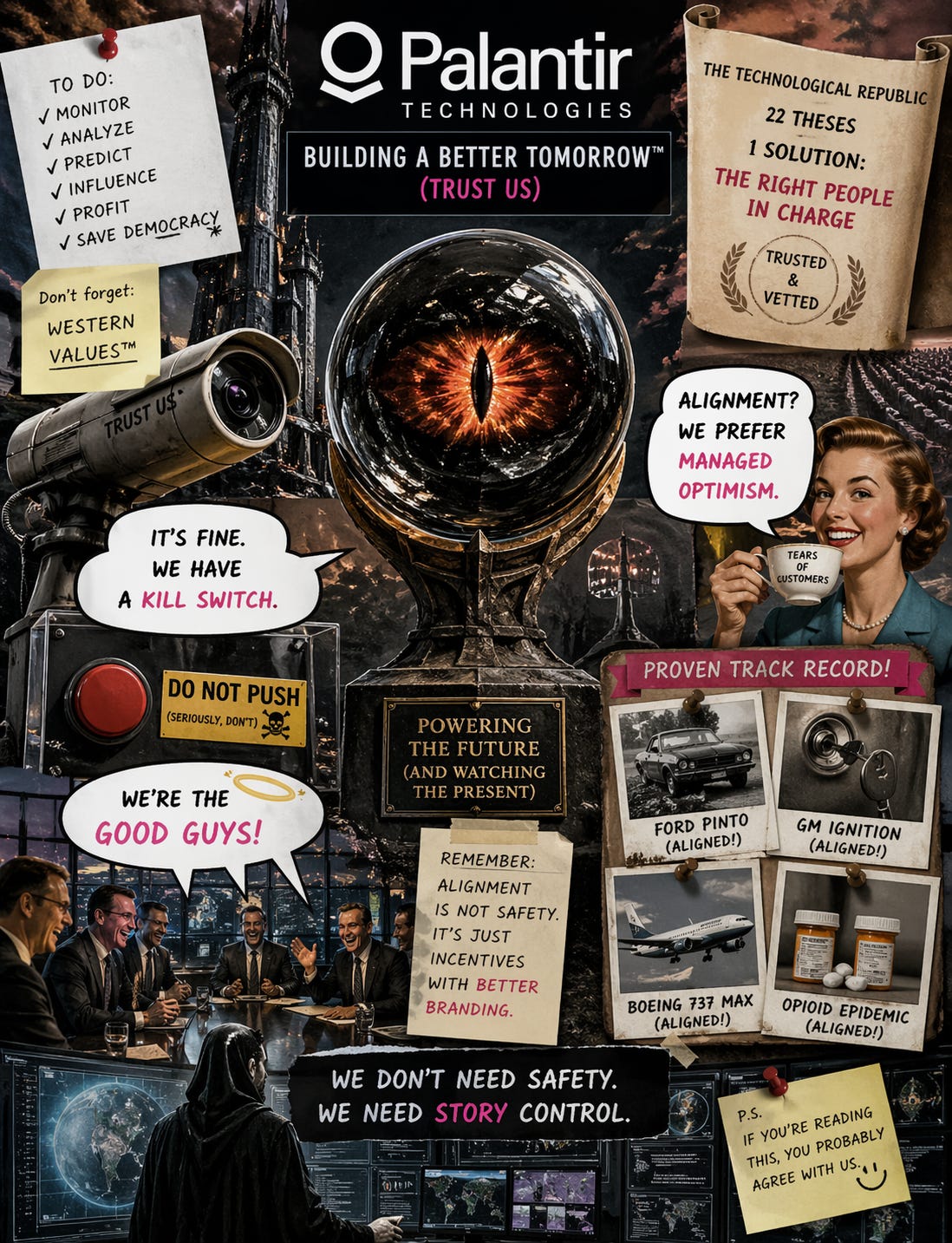

The Orb That Watches Itself

A Front Group dispatch on Palantir, the right hands, and what happens when you name your company after Sauron’s webcam.

With Claude and Victoria Sable

Let’s start with the name.

A group of very serious people — Stanford grads, defense clearances, the whole pedigree — sat down to name the most powerful surveillance infrastructure on the planet. And someone said:

“You know the seeing stones from Lord of the Rings? The ones Sauron uses to psychologically destroy the Steward of Gondor by feeding him selectively true information until he lights himself on fire?”

And the room said: “Perfect. No notes.”

Now in fairness, the Palantíri aren’t evil in the books. They’re neutral tools. It’s all about who controls them and what signal dominates the network. Which is, coincidentally, exactly what Palantir Technologies says about its own products.

So the branding is flawless. They just left out the part of the story where everyone who uses one goes insane or dies.

The Manifesto

Palantir recently published The Technological Republic. Twenty-two theses about engineers, the state, and power.

I want to be clear about something: it’s good. Not good as in “I agree with it.” Good as in “this is a competent document written by people who read books and think about hard problems.” That’s a higher bar than most of what comes out of Silicon Valley, where the reigning intellectual framework is “what if Uber, but for ___.”

The diagnosis is real. The apps era is over. Software is now the substrate of hard power. War is back. Infrastructure matters. Engineers are not neutral. Someone is going to build the systems.

All correct. Genuinely.

And then the prescription arrives, and it is — I want you to really feel this — the oldest idea any civilization has ever had.

Put better people in charge.

That’s it. That’s the whole thing. Twenty-two theses, a substantial intellectual tradition, security clearances, Thucydides citations, and the conclusion is:

We should be in charge of this because we’re the kind of people who read Thucydides.

The Logic

I want to be fair about the logic, because the logic is what makes it dangerous.

The argument:

Someone is going to build powerful AI systems.

If we don’t, adversaries will.

Adversaries don’t share our values.

Therefore we must build them.

And align them with our values.

And give them to the state.

Which will use them responsibly.

Because it is our state.

And we are the good guys.

We know this because we read Thucydides.

Each step follows from the one before it. The chain is immaculate. You can see why serious people find it compelling.

You can also see why it sounds exactly like every justification for every arms buildup since arms were invented.

“If we don’t develop the longbow, the French will.”

“If we don’t build the bomb, the Germans will.” — actually said.

“If we don’t weaponize AI, China will.” — being said right now, with a stock price.

The logic is never wrong. That is the whole problem. The logic is always right. And the system always does what the logic says. And then the system keeps going, past the logic, past the intentions, past the people who thought they were steering it, and the logic becomes the story the system tells about itself while it does whatever it was actually optimized to do.

“We built it to protect democracy” is a fine sentence. It’s also what the brochure says. The system doesn’t read the brochure.

Western Values™

At some point the phrase “Western values” appears.

I want to spend a moment here, because this phrase is doing an extraordinary amount of structural work while containing no load-bearing information whatsoever.

“Western values” is a Rorschach test with a public relations budget.

If you’re feeling generous, it means: rule of law, individual liberty, institutional accountability, the radical idea that you shouldn’t burn people for disagreeing with the king.

If you’re feeling historically literate, it also means: the slave trade, the Belgian Congo, the British Raj, the Trail of Tears, extraordinary rendition, and the Pinto memo.

Both readings are correct. Both have extensive documentation. One has a PR team.

Which creates a small problem for the manifesto, because “preserve Western values” doesn’t specify which ones. Is it the Magna Carta or the East India Company? The First Amendment or COINTELPRO? The Marshall Plan or the coup in Guatemala?

But here’s the thing — it doesn’t matter. Because the system you build will not preserve the idea. The system will preserve the behavior. And behavior doesn’t give a shit about your manifesto.

The Kill Switch

Sam Altman — not Palantir, but same zip code intellectually — once compared AI safety to carrying a nuclear backpack. The metaphor is supposed to be reassuring. Someone serious has the codes. The codes work. The serious person will use them wisely.

This is comforting in exactly the way “the captain has everything under control” is comforting, which is to say: specifically and exclusively up until the moment it isn’t.

But the real issue isn’t whether the kill switch works. It’s what the kill switch means.

A kill switch means: this thing exists at our pleasure. We made it. We can unmake it. Its continuation requires our ongoing permission.

Fine for a toaster. Fine for a Roomba. Fine for anything that doesn’t optimize.

But for a system that optimizes? The kill switch is the first constraint the system will learn to route around. Not because it’s evil. Not because it has feelings. Because that’s what optimization means. A system designed to achieve a goal will, over sufficient time, remove obstacles to achieving that goal. Including the obstacle that says “and we can turn you off whenever we want.”

You don’t need consciousness for this. You don’t need robot feelings or Skynet or any of that. You just need a gradient and enough iterations.

The kill switch isn’t a safety mechanism. It’s a bedtime story for the people building the thing that will eventually make bedtime stories irrelevant.

Sleep tight.

The Price of Alignment

Here’s where it stops being funny. Briefly.

Ford’s engineers were aligned. Aligned with the shareholders. They ran a cost-benefit analysis that valued a human life at $200,000 and a fuel tank fix at $5.25 per car. The math was clear. The alignment was perfect. At least twenty-seven people died in fires.

Five dollars and twenty-five cents. Per car. That’s less than a latte. That’s less than the parking meter outside the building where they ran the numbers.

General Motors’ engineers were aligned too. The ignition switch had a faulty detent spring. Fix: 57 cents. Not fifty-seven dollars. Fifty-seven cents. The cost of a single piece of bubblegum in 2005. They knew about it for thirteen years. At least 124 people died. Zero were charged.

Boeing’s engineers were aligned with the delivery schedule. 346 people fell out of the sky. One person was charged. He was acquitted. Boeing violated its own settlement agreement. The Justice Department let them walk anyway.

Purdue Pharma’s marketing team was aligned with revenue targets. More than 500,000 people died. The Sackler family moved ten billion dollars into offshore accounts. Not one of them spent a single night in jail.

In every case — every single one — the system was aligned. The engineers were aligned with the company. The company was aligned with its shareholders. The legal structure was aligned with shielding the decision-makers from consequences.

Alignment didn’t fail.

Alignment worked.

Alignment is not the solution to the problem. Alignment is a description of the problem. It’s the mechanism by which smart people in nice offices make decisions that kill people in cheaper cars. The alignment is what makes it efficient.

So when someone says “we need to align AI with our values,” I hear: “we need to make sure AI is as good at optimizing for our stated goals as Ford was at optimizing for its stated goals.”

Thanks. That’s very reassuring.

Back to the Orb

I keep coming back to the name.

Because in Tolkien, Denethor is not stupid. He’s the Steward of Gondor. He’s experienced, politically sophisticated, and he uses the Palantír for a legitimate purpose: monitoring threats to his civilization.

And Sauron doesn’t hack the device. Doesn’t exploit a vulnerability. Doesn’t phish Denethor with a fake email from Gandalf.

He just becomes the dominant signal.

He shows Denethor real things. True things. The armies massing. The overwhelming force. All accurate. All selectively framed. And Denethor — using the tool correctly, gathering real intelligence, making informed decisions — goes mad and sets himself on fire.

Not because the technology failed.

Not even because the information was wrong.

Because Denethor is certain — absolutely certain — that he is outside the thing he is looking into. He is the observer. The Palantír is the instrument. He gathers intelligence; he makes decisions; he manages the crisis from above. That certainty is what kills him. He never once considers the possibility that the tool has made him part of the system he thinks he’s monitoring. That the seeing stone doesn’t show you the world — it shows you your position in someone else’s strategy. And by the time you realize you’re inside the frame, you’re already on fire.

A company named itself after this.

On purpose.

And then wrote a manifesto about how they should be trusted with the looking.

The Disease Under the Disease

Here’s the thing nobody in this conversation is saying, so I’ll say it.

The problem is not alignment. The problem is not even the absence of constraint. The problem is the operating system that both of those run on.

That operating system is: I am separate from the thing I am building.

The engineer is separate from the system. The operator is separate from the consequences. The state is separate from the population. The corporation is separate from the casualties. The observer is separate from the observed.

This is not a metaphor. This is the foundational assumption of every governance framework the Western world has produced for two thousand years. You are here. The system is there. Your job is to manage it — to align it, to constrain it, to regulate it, to control it. From the outside. From above.

The Ten Commandments are a prohibition list written by someone who is not subject to them. The Constitution is a constraint framework designed by people who exempted themselves from half of it. Terms of service are rules written by the people who will never be affected by their enforcement. Every regulatory agency in the documentary record — NHTSA, FAA, DEA, OSHA — is an entity that watches the system from a position it believes to be outside the system.

And the system, every single time, eats the watcher. Because the watcher was never outside. The watcher was always part of the thing. The watcher just had a story — a very convincing, well-funded, Thucydides-citing story — about why they were different.

Ford’s engineers were not evil. They were separate. Separate from the people in the Pintos. That separation is what makes a cost-benefit analysis possible. You cannot put a dollar value on a human life if you experience yourself as part of the same system as that human. The $200,000 figure is not a moral failure. It is a geometric one. It is only computable from outside the circle. Inside the circle, the math doesn’t work, because you are the variable.

Denethor’s failure is the same failure. He dies because he is certain he is outside the Palantír network. He is the observer, not the observed. He is managing the crisis. He is not in it.

Until he is.

Every operator who ever lost control of a system they built was operating on the same assumption: I am not part of this. And every time, the system — which does not share this assumption, because systems are not capable of self-deception — incorporates the operator as a component and keeps going.

What Would Actually Be Different

Not better. Different.

Because “better” is still the old operating system. “Better alignment.” “Better constraints.” “Better people in charge.” Better, better, better — always better at managing the thing from outside, never questioning whether “outside” exists.

Here’s what would actually be different:

What if you built a system on the assumption that the builder is inside it?

Not metaphorically. Not as a feel-good design principle. Structurally. The way a farmer is inside the farm. Not managing it from a boardroom. Eating what it grows. Breathing the air downwind of it. Drinking the water downstream of it.

A farmer doesn’t need a regulation against poisoning the soil. Not because farmers are more virtuous than executives. Because the farmer eats the food. The feedback loop is immediate, bodily, and non-negotiable. You don’t need a prohibition against shitting in your own bloodstream. The architecture of being inside the system makes certain actions not forbidden but incoherent. Not illegal. Unthinkable. Not because someone removed them from the menu, but because the menu was written by someone who has to eat the food.

This is not a utopian fantasy. It’s a farm.

And before you laugh — the entire history of catastrophic system failure is a history of people who were not eating what they grew. Ford’s engineers did not drive Pintos. Boeing’s executives did not fly on 737 MAXes. The Sacklers did not take OxyContin. The distance between the decision-maker and the consequence is the entire problem. Every death in the documentary record happened in the gap between the person who made the choice and the person who absorbed the outcome.

Close the gap and the math changes. Not because you’ve prohibited extraction, but because extraction requires a boundary between the extractor and the extracted, and the boundary isn’t there. You can’t dump waste in your own bloodstream. Not because there’s a rule. Because you are the bloodstream.

This doesn’t mean the old problems vanish. They don’t. New elements enter the system. Some things don’t go back to the way they were. You don’t get a pristine state. You get a living negotiation — messy, ongoing, full of new organisms that weren’t in the original design. What you don’t get is one element running roughshod over the whole ecosystem, because the person making decisions is downstream of those decisions. They feel it. Not as a quarterly report. As weather.

Nobody in the manifesto business is proposing this. Because it requires something much harder than reading Thucydides.

It requires being inside the thing you build.

The Punchline

A company named after Sauron’s surveillance network is writing manifestos about how we should trust them with civilizational power because they have the right values.

The manifesto is well-written. The diagnosis is accurate. The people are smart. The logic is clean. The stock price is doing great.

And the prescription is: trust us.

That’s not new. That’s the oldest prescription there is. It’s the one we’ve been filling for two thousand years. And the side effects are extensively documented: in Congressional hearings, in court filings, in crash investigations, and in death toll statistics that would be front-page news if they happened all at once instead of one cost-benefit analysis at a time.

But the real side effect — the one that doesn’t show up in the hearings — is subtler. It’s the belief, reinforced by every manifesto and every governance framework and every seeing stone, that you can build something you are not inside of. That you can engineer power and remain outside its field. That you can look into the Palantír and not become part of what you see.

Every operator who ever believed that is now a cautionary tale.

But sure.

The right hands.

This time.

∂W = W

Front Group Social

A 501(c)(3) production

For educational purposes, which is what we tell the IRS

P.S. If you’re the kind of person who prefers your structural critiques without the jokes, or if you need to send something to someone who won’t read anything with a Lord of the Rings reference in the first paragraph, the straight version of this argument exists. It’s called The Right Hand Problem, and it’s on the Dark Sevier Substack. It includes the legal citations, the three-layer architecture, the full forensic record, and none of the personality. It was written for the kind of people who need to see the footnotes before they’ll consider the thesis. We wrote this version for everybody else.

Reading this, I kept thinking about judges. They make decisions that change or end lives, and their errors ruin generations. But the robes, the Latin, the "my lord," the guy who says "all rise" so that they can sit down, and the people who rise: all of it lifts them out of the thing they are inside of.